Overview :

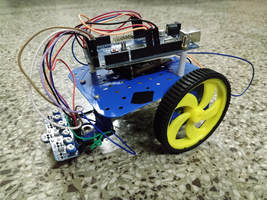

An autonomous robot that solves mazes drawn as black lines on a white background (or vice versa). The maze can contain dead ends and intersections.

Hardware :

Working :

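The maze solver uses the "Left Hand Algorithm" (wall following): in a simply connected maze, "by keeping one hand in contact with one wall of the maze the solver is guaranteed not to get lost and will reach a different exit if there is one". On a line maze this means taking the leftmost available branch at every intersection and turning around at dead ends.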

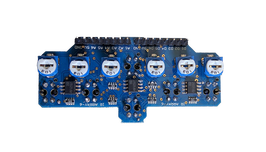

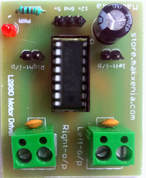
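The left-hand rule can be sketched as a priority check at each intersection. This is a minimal illustration, not the project's actual code: the `Junction` struct, the `Move` enum and `chooseMove` are hypothetical names, and the sketch assumes the sensors have already been decoded into "which branches exist here".

```cpp
// Branches detected at the current intersection (hypothetical representation).
struct Junction {
    bool left;
    bool straight;
    bool right;
};

enum class Move { Left, Straight, Right, UTurn };

// Left-hand rule: prefer left, then straight, then right.
// If no branch exists, the bot is at a dead end and turns around.
Move chooseMove(const Junction& j) {
    if (j.left) return Move::Left;
    if (j.straight) return Move::Straight;
    if (j.right) return Move::Right;
    return Move::UTurn;
}
```

For example, at a T-junction with only left and right branches, `chooseMove({true, false, true})` returns `Move::Left`.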

The Arduino reads the manually calibrated IR sensor array, works out the position of the line relative to the bot, and from that determines the speed and direction of rotation for each DC motor. It then sends the corresponding signals to the motor drivers, which drive the motors.

Motor speed is varied using PWM, and line following uses proportional control. Each sensor is assigned a weight that depends on its position in the array, and the error is the sum of the products of the weights and the sensor readings. A speed correction proportional to this error is then applied to the motors, which gives smooth line following.
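The weighted-error calculation described above might look like the following sketch. Everything numeric here is an assumption chosen for illustration: a 5-sensor array ordered left to right, digital readings (1 = on the line), the weights, the base speed and the gain. The function names `lineError` and `motorSpeeds` are hypothetical too.

```cpp
#include <algorithm>
#include <array>

constexpr int kNumSensors = 5;                               // assumed array size
constexpr int kWeights[kNumSensors] = {-2, -1, 0, 1, 2};     // assumed weights, left to right
constexpr int kBaseSpeed = 150;                              // assumed PWM duty (0..255)
constexpr int kGain = 40;                                    // assumed proportional gain

// Error = sum of (weight * reading). Zero when the line is centred,
// negative when it lies to the left, positive when it lies to the right.
int lineError(const std::array<int, kNumSensors>& readings) {
    int error = 0;
    for (int i = 0; i < kNumSensors; ++i) {
        error += kWeights[i] * readings[i];
    }
    return error;
}

struct MotorSpeeds {
    int left;
    int right;
};

// Speed up one wheel and slow the other in proportion to the error,
// clamping both values to the valid 8-bit PWM range.
MotorSpeeds motorSpeeds(int error) {
    const int correction = kGain * error;
    const int left = std::max(0, std::min(255, kBaseSpeed + correction));
    const int right = std::max(0, std::min(255, kBaseSpeed - correction));
    return {left, right};
}
```

With these assumed values, a line under the two rightmost sensors gives an error of 3, so the left wheel runs faster than the right and the bot steers right, back toward the line. On the Arduino, the two results would be written to the motor driver's PWM pins.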

Sharp turns could not be achieved using proportional control, so they were handled separately.
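The source does not say how sharp turns were handled, so the following is only one plausible approach, sketched under stated assumptions: a 5-sensor array ordered left to right (1 = on the line), a corner detected when the two outermost sensors on one side both see the line, and a pivot performed by driving the wheels in opposite directions. The names `Action`, `classify` and `pivotCommand` are hypothetical, and the sketch does not check whether a straight branch also exists.

```cpp
#include <array>

enum class Action { FollowLine, PivotLeft, PivotRight };

// Decide whether the sensor pattern looks like a sharp corner.
// Left is checked first so the choice agrees with the left-hand rule.
Action classify(const std::array<int, 5>& r) {
    const bool lineOnFarLeft = r[0] && r[1];
    const bool lineOnFarRight = r[3] && r[4];
    if (lineOnFarLeft) return Action::PivotLeft;
    if (lineOnFarRight) return Action::PivotRight;
    return Action::FollowLine;  // leave steering to proportional control
}

// Signed wheel speeds: negative means reverse. Opposite signs spin the bot in place.
struct WheelCommand {
    int left;
    int right;
};

WheelCommand pivotCommand(Action action, int speed) {
    if (action == Action::PivotLeft) return {-speed, speed};
    if (action == Action::PivotRight) return {speed, -speed};
    return {speed, speed};  // not a pivot: drive straight at the given speed
}
```

Pivoting in place lets the bot make a 90-degree turn without the wide arc that a purely proportional correction would produce.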

Check out our code on GitHub.

Team Members :

Akshay Kulkarni

Aniket Gujarathi

Project done for the Autobot competition at Axis '18, the technical festival of VNIT, Nagpur.
